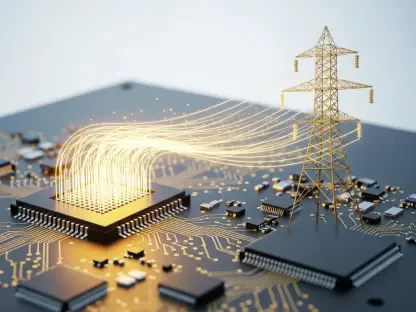

The sudden surge in artificial intelligence adoption has caught the energy sector in a state of profound transition, forcing a reevaluation of principles that have guided grid management for decades. Unlike previous eras of industrial expansion, the current shift is not merely about an increase in the volume of electrons required to power modern society; it represents a radical departure from the predictable consumption patterns that once defined electricity markets. High-density AI data centers introduce a level of operational complexity that traditional utility load-planning systems were never designed to accommodate, upending long-standing infrastructure paradigms. The root cause is that AI workloads do not behave like typical commercial or residential loads. Instead, they operate as highly concentrated, dynamic energy systems that demand immediate and substantial capacity, often on timelines that outpace the multi-year planning cycles utilities rely on. This pressure is pushing utilities from reactive grid maintenance toward a proactive, evidence-based strategy.

The Unprecedented Scale of Energy Demand

Historically, data centers have accounted for a small share of national energy use, but projections from the Electric Power Research Institute suggest their share of electricity consumption could nearly quadruple by 2030. This growth is fueled by the rapid deployment of high-performance computing clusters needed for AI training and inference. As the race for market share intensifies, the power required to sustain these operations is moving from a niche concern to a primary driver of national energy policy. Specialized AI processing requires significantly more energy per square foot than general-purpose cloud computing, concentrating demand to a degree unprecedented in the history of electrification. In many major metropolitan areas, clusters of these facilities are already pushing existing transmission lines toward their physical limits. Consequently, utility planners are abandoning decades-old growth projections in favor of aggressive, high-density scenarios that account for the rapid scaling of neural network training.
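Because the projection is expressed as a multiple over a period, it can help to convert it into an implied compound annual growth rate. The worked example below is a back-of-the-envelope sketch: $S$ denotes the data center share of electricity consumption, and the six-year horizon (for example, 2024 to 2030) is an assumption, since the base year is not stated here.

```latex
r = \left(\frac{S_{2030}}{S_{0}}\right)^{1/n} - 1,
\qquad \frac{S_{2030}}{S_{0}} \approx 4,\; n = 6
\;\Rightarrow\; r \approx 4^{1/6} - 1 = 2^{1/3} - 1 \approx 0.26
```

Under that assumption, the share would need to compound at just under 26% per year, which illustrates why growth projections built on slow, steady trends no longer hold.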

In practical terms, utilities now face individual requests for hundreds of megawatts from single customers, with some large-scale developments targeting gigawatt-scale capacity. These requests, combined with aggressive timelines from developers racing to maintain a competitive advantage, place immense logistical strain on the companies responsible for providing reliable power. The pressure to accommodate these concentrated loads quickly is forcing a complete rethink of how utilities approach long-term capacity planning. Traditionally, adding such a significant load would involve a decade of study and construction, but the current market demands results in a fraction of that time. This disconnect between the speed of the technology sector and the deliberate pace of utility infrastructure development is creating a bottleneck that threatens both economic growth and grid stability. Furthermore, gigawatt-scale campuses, with their large footprints and cooling infrastructure, often require entirely new substations and high-voltage transmission upgrades that were not in previous plans.
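For a sense of scale, annual energy follows from peak capacity, the 8,760 hours in a 365-day year, and the load factor LF (average draw divided by peak draw). The load factor of 0.8 below is an illustrative assumption, not a figure from the projections discussed here.

```latex
E_{\text{annual}} = P_{\text{peak}} \times 8760\,\text{h} \times \mathrm{LF},
\qquad
1\,\text{GW} \times 8760\,\text{h} \times 0.8 \approx 7{,}008\,\text{GWh} \approx 7.0\,\text{TWh}
```

At a load factor of 1.0, the same gigawatt campus would consume about 8.76 TWh per year.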

Managing Dynamic Volatility and Interconnection Hurdles

Beyond the sheer volume of energy, the behavior of AI power consumption is shifting from predictable stability to extreme volatility. Traditional utility planning relies on stable load profiles in which changes are gradual and seasonal, but AI facilities act as high-density systems whose power draw can fluctuate by up to 50% in a matter of moments. These swings are tied directly to the intensity of computational workloads: when an AI model begins a heavy processing task, power draw spikes almost instantly. The cooling systems must then work harder to dissipate the added heat, further increasing the load in an unplanned manner. Standard forecasting models cannot accurately capture this variability, leaving grid operators with little visibility into real-time demand. Without a way to predict these surges, the risk of frequency deviations and equipment damage increases across the distribution network. In effect, operators must manage a grid that is becoming less predictable every day.
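One way to make "fluctuate by up to 50% in a matter of moments" measurable is to compute ramp rates and windowed swings from a metered load trace. The sketch below is illustrative only: the 1-second sampling interval, the definition of swing as (max - min) / max within a sliding window, the function names, and the synthetic trace are all hypothetical assumptions, not an industry-standard metric.

```python
"""Sketch: quantify how sharply a facility's power draw swings.

Assumptions (hypothetical): load is sampled at a fixed interval, and
"swing" means (max - min) / max within a window of consecutive samples.
"""


def max_ramp_mw_per_s(load_mw: list[float], interval_s: float = 1.0) -> float:
    # Largest absolute change between consecutive samples, in MW per second.
    return max(
        (abs(b - a) / interval_s for a, b in zip(load_mw, load_mw[1:])),
        default=0.0,
    )


def max_window_swing(load_mw: list[float], window: int) -> float:
    # Largest relative swing (max - min) / max over every run of `window` samples.
    worst = 0.0
    for start in range(len(load_mw) - window + 1):
        chunk = load_mw[start:start + window]
        peak = max(chunk)
        if peak > 0:
            worst = max(worst, (peak - min(chunk)) / peak)
    return worst


# Synthetic 1-second trace (MW): idle, a heavy training step starts, then stops.
trace = [100.0, 101.0, 100.0, 180.0, 200.0, 198.0, 110.0, 100.0]
print(max_ramp_mw_per_s(trace))
print(round(max_window_swing(trace, window=3), 2))
```

On this made-up trace, a 3-second window captures a swing from 100 MW to 200 MW, i.e. 50% of the window peak. A real assessment would use metered data at much higher resolution.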

These unpredictable surges complicate the interconnection process, because utilities must determine whether they can support such large loads without endangering the broader grid. In many regions, the total capacity requested in interconnection queues now dwarfs current peak demand, creating a high-stakes environment for decision-makers. Overestimating this demand leaves ratepayers paying for unused infrastructure, while underestimating it can cause grid congestion and reliability failures. The balance is made harder by the fact that many developers submit multiple requests in the same region, hoping to secure at least one connection. This “queue bloat” makes it nearly impossible for utility engineers to distinguish serious projects from speculative ventures. Moreover, the geographic concentration of these requests often places a disproportionate burden on specific nodes of the transmission system. If a utility miscalculates the impact of a 500-megawatt connection, the result could be localized brownouts or costly reactive infrastructure repairs.
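The effect of queue bloat on apparent demand can be illustrated by comparing a raw queue total with a deduplicated estimate. This is a minimal sketch under a loudly hypothetical heuristic: it assumes each developer intends to build at most one project per region and keeps only that developer's largest request there. The `QueueRequest` fields and all figures are invented for illustration; real queue data formats and screening rules vary.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class QueueRequest:
    # Hypothetical record layout for illustration only.
    developer: str
    region: str
    mw: float


def raw_queue_mw(requests: list[QueueRequest]) -> float:
    # Naive total: every request counted as if it will be built.
    return sum(r.mw for r in requests)


def deduplicated_queue_mw(requests: list[QueueRequest]) -> float:
    # Hypothetical heuristic: one project per (developer, region),
    # sized at that developer's largest request in the region.
    largest: defaultdict[tuple[str, str], float] = defaultdict(float)
    for r in requests:
        key = (r.developer, r.region)
        largest[key] = max(largest[key], r.mw)
    return sum(largest.values())


queue = [
    QueueRequest("DevA", "North", 500.0),
    QueueRequest("DevA", "North", 500.0),  # same project filed at another site
    QueueRequest("DevA", "North", 300.0),
    QueueRequest("DevB", "North", 200.0),
    QueueRequest("DevB", "South", 250.0),
]
print(raw_queue_mw(queue))
print(deduplicated_queue_mw(queue))
```

Here the raw queue shows 1,750 MW, while the heuristic suggests closer to 950 MW of likely demand. The gap is exactly the uncertainty planners face, and any real screening rule would need evidence of project readiness rather than a simple grouping assumption.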

Advanced Modeling and Regulatory Accountability

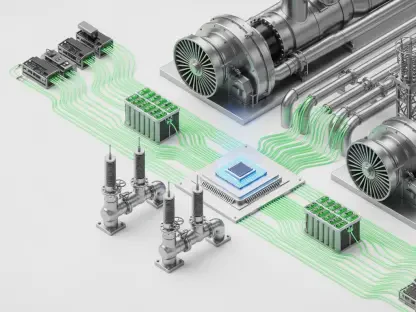

To manage these risks, the industry is moving away from static estimates toward physics-based modeling that simulates how facilities will behave under real-world conditions. By analyzing ramp rates and thermal management strategies over an entire year of operation, developers can give utility planners the granular data needed to identify potential bottlenecks. This evidence-based approach supports a more resilient integration of large loads into the existing power system because it accounts for environmental variables such as ambient temperature and humidity. Advanced software tools now enable “digital twin” simulations of data centers, giving grid operators a sandbox in which to test load-shedding and demand-response scenarios. By understanding how IT hardware interacts with mechanical cooling systems, planners can design interconnection agreements that include flexible demand protocols. Moving from guessing at peak loads to modeling minute-by-minute behavior is essential to ensuring the grid can handle the AI revolution safely.
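The idea of sweeping a full year of conditions to find peak demand can be sketched with hourly ambient temperatures fed into a cooling-overhead model. This is a deliberately simplified stand-in, not a physics-based model: the synthetic climate curve, the 10% base overhead, the 1%-per-degree penalty above an 18 °C free-cooling limit, and every function name are hypothetical assumptions, and humidity is ignored.

```python
import math

HOURS_PER_YEAR = 8760


def ambient_temp_c(hour: int) -> float:
    # Hypothetical climate: seasonal swing peaking mid-year, plus a daily
    # cycle that peaks at 15:00 and bottoms out at 03:00.
    seasonal = 15.0 - 12.0 * math.cos(2 * math.pi * hour / HOURS_PER_YEAR)
    daily = 5.0 * math.sin(2 * math.pi * (hour % 24 - 9) / 24)
    return seasonal + daily


def facility_load_mw(it_load_mw: float, temp_c: float) -> float:
    # Hypothetical cooling model: fixed overhead, plus extra cooling power
    # for each degree above the free-cooling limit.
    base_overhead = 0.10
    per_degree = 0.01
    free_cooling_limit_c = 18.0
    overhead = base_overhead + per_degree * max(0.0, temp_c - free_cooling_limit_c)
    return it_load_mw * (1.0 + overhead)


def annual_peak(it_load_mw: float) -> tuple[float, int]:
    # Evaluate every hour of the year and return (peak MW, hour of peak).
    loads = [
        facility_load_mw(it_load_mw, ambient_temp_c(h))
        for h in range(HOURS_PER_YEAR)
    ]
    peak = max(loads)
    return peak, loads.index(peak)


peak_mw, peak_hour = annual_peak(it_load_mw=300.0)
print(f"Peak facility demand: {peak_mw:.1f} MW at hour {peak_hour}")
```

With these made-up parameters, the peak lands on the hottest summer afternoon, about 24% above the IT load. A flat IT load is itself a simplification; a fuller model would layer the workload volatility described earlier on top of the thermal response.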

Alongside these technical solutions, regulators are increasingly focused on the socioeconomic impact of AI expansion, specifically on who should pay for new infrastructure. There is a growing debate over whether large energy consumers should bear a greater share of investment costs to protect residential and small-business ratepayers from price hikes. In several states, fair cost allocation is becoming a central theme in regulatory hearings, as communities worry about the rising costs of grid upgrades. Policymakers are exploring new tariff structures and cost-sharing models that require high-load customers to contribute more to the foundational upgrades they necessitate. At the same time, there is a push to ensure that data center growth does not stall progress toward decarbonization goals. Integrating renewable energy sources becomes more complex when paired with the large, volatile demand of AI facilities. Balancing these economic, social, and environmental factors requires transparent dialogue among all stakeholders so that the benefits of AI are not outweighed by grid instability.

The Path Forward: A Framework for Shared Responsibility

The transition toward an AI-integrated grid calls for a fundamental shift in how the industry views the relationship between technology and infrastructure. Utilities are recognizing that static planning is no longer sufficient, and developers are realizing that their growth is inextricably linked to the health of the power grid. This shift depends on more transparent data sharing, lifting the veil between data center operations and grid management. Collaborative efforts can produce more resilient transmission networks capable of absorbing large, sudden loads without failure. Stakeholders need to engage in mutual education, with energy providers learning the nuances of liquid cooling and GPU workloads while technology companies help finance the substations they use. Regulatory frameworks can also mature to reward efficiency and discourage wasteful consumption. Together, these efforts would keep the power system a robust foundation for technological advancement rather than a bottleneck for innovation.

Looking ahead, attention is turning to decentralized energy solutions and local generation to ease the strain on long-distance transmission. Data centers can integrate on-site battery storage and microgrids, allowing them to act as partners in grid stability rather than just consumers. Such facilities can provide ancillary services, such as frequency regulation and spinning reserves, turning a potential liability into a valuable grid asset. A logical next step is standardizing performance metrics so that every new AI campus meets strict criteria for both energy efficiency and grid responsiveness. Regulators can also encourage siting data centers in regions with excess renewable capacity, improving the geographic distribution of load. Aligning these interests would create a more sustainable path for the expansion of digital intelligence. Ultimately, the success of this transition depends on the ability of disparate industries to align their goals, keeping the electrical grid reliable, affordable, and ready to power the next generation of discovery.
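How on-site battery storage can ease grid strain is easiest to see with peak shaving: the battery discharges when facility load exceeds a grid-draw cap and recharges when there is headroom below it. The sketch below uses a hypothetical greedy dispatch rule with hourly steps, ignores round-trip losses, and uses invented parameter names and figures; it does not model frequency regulation or reserve markets.

```python
def peak_shave(
    load_mw: list[float],
    cap_mw: float,
    battery_mwh: float,
    battery_mw: float,
    step_h: float = 1.0,
) -> list[float]:
    """Return grid draw per step after a simple greedy battery dispatch.

    Hypothetical rule: discharge whenever load exceeds cap_mw, recharge
    using spare headroom below the cap. Round-trip losses are ignored.
    """
    soc_mwh = battery_mwh  # assume the battery starts fully charged
    grid = []
    for load in load_mw:
        if load > cap_mw:
            # Discharge, limited by the excess, power rating, and stored energy.
            discharge = min(load - cap_mw, battery_mw, soc_mwh / step_h)
            soc_mwh -= discharge * step_h
            grid.append(load - discharge)
        else:
            # Recharge, limited by headroom, power rating, and remaining capacity.
            charge = min(cap_mw - load, battery_mw, (battery_mwh - soc_mwh) / step_h)
            soc_mwh += charge * step_h
            grid.append(load + charge)
    return grid


facility = [300.0, 320.0, 420.0, 450.0, 380.0, 310.0]
print(peak_shave(facility, cap_mw=400.0, battery_mwh=100.0, battery_mw=60.0))
```

In this made-up case, a 100 MWh, 60 MW battery keeps grid draw at or below 400 MW despite a 450 MW facility peak. A production controller would also weigh efficiency losses, load forecasts, and any commitments to provide ancillary services.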