The physical manifestation of the artificial intelligence revolution is no longer found in sleek consumer gadgets, but in sprawling, humming industrial complexes that can consume as much electricity as a medium-sized city. The traditional enterprise data center, once a quiet backbone of corporate IT, has been eclipsed by high-density AI clusters designed to train and serve models with up to trillions of parameters. This shift is not merely a scaling exercise; it represents an architectural pivot that forces us to reconsider how we build, cool, and power computing facilities. The driver of this evolution is the surging demand for generative AI, which has pushed hardware requirements far beyond the limits of standard server racks.

What sets this new generation of facilities apart is the departure from general-purpose computing toward specialized, hyper-dense environments. In the past, data centers were built for a variety of tasks (storing emails, hosting websites, or running databases) where workloads were sporadic and manageable. Modern AI infrastructure, by contrast, is characterized by sustained, peak-load processing. The bottleneck is therefore no longer just the speed of the processor, but the physical ability of a building to take in and dissipate immense amounts of energy.

The Evolution of AI-Optimized Computing Environments

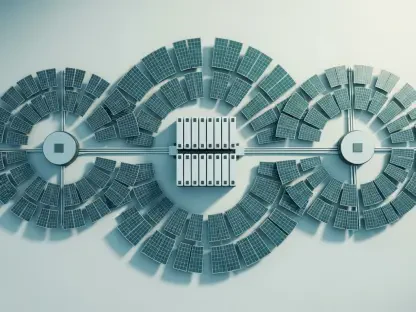

The transition from traditional server rooms to AI-optimized environments has been driven by the specialized nature of neural network training. Unlike standard applications that rely on central processing units (CPUs) for largely sequential tasks, AI workloads require massive parallelism. This led to the rise of GPU- and TPU-centric clusters, in which thousands of interconnected chips work as a single logical machine. These clusters have rewritten the rulebook for infrastructure design, moving it away from low-density cabinets toward high-performance compute nodes that generate far more heat per rack.

Moreover, the broader technological landscape is now grappling with the reality that software efficiency alone cannot solve the resource problem. As models grow in complexity, the hardware supporting them must become increasingly bespoke. This specialization means that the facilities being built today are fundamentally different from those constructed even a few years ago. They are no longer just “buildings with computers” but are essentially massive, integrated cooling and power distribution machines that happen to house silicon.

Architectural Foundations and Energy Dynamics

High-Density Compute and Processing Clusters

The heart of the modern AI data center lies in its specialized processing clusters, primarily powered by advanced GPUs and TPUs. These chips are designed to handle the matrix multiplications that define deep learning, but their performance comes at a heavy cost in power draw. A typical server might pull 500 watts, and a traditional enterprise rack filled with such servers typically draws on the order of 5 to 10 kilowatts; a single AI-optimized rack can now demand upwards of 100 kilowatts. This concentration of power matters because it creates a thermal density that quickly overwhelms traditional air-cooled infrastructure.

What distinguishes these clusters is the interconnect between nodes. To train or serve a large language model, data must move between thousands of chips with very low latency. This requires a dense web of fiber optics and specialized switching fabrics operating at speeds previously reserved for supercomputing labs. The design differs from general-purpose cloud infrastructure in that it prioritizes raw throughput and memory bandwidth over multi-tenancy or flexibility.

Thermal Management and Liquid Cooling Systems

As power density climbs, air cooling is reaching its practical limit. The industry is rapidly pivoting toward liquid cooling, where dielectric (non-conductive) fluids or water-cooled cold plates are placed in direct contact with the processors. This approach can reduce the energy overhead required for cooling by nearly 40%. By reducing the need for massive, energy-hungry fans and air-handling units, operators can dedicate more of the facility’s power budget directly to the silicon.
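The effect on the power budget can be made concrete with a little arithmetic. The sketch below is illustrative only: the fixed 100 MW facility budget, the baseline cooling overhead of 0.40 W per watt of IT load, and the 0.10 W of other overhead are assumed values, not figures from any specific facility. Only the roughly 40% reduction comes from the text above.

```python
def it_power_mw(facility_mw: float, cooling_overhead: float, other_overhead: float) -> float:
    """IT power available under a fixed facility power budget.

    Overheads are watts of overhead per watt of IT load, so
    PUE (power usage effectiveness) = 1 + cooling_overhead + other_overhead.
    """
    pue = 1.0 + cooling_overhead + other_overhead
    return facility_mw / pue


# Assumed, illustrative inputs (not measured data).
FACILITY_MW = 100.0
AIR_COOLING_OVERHEAD = 0.40
OTHER_OVERHEAD = 0.10
COOLING_REDUCTION = 0.40  # the "nearly 40%" cited in the text

liquid_cooling_overhead = AIR_COOLING_OVERHEAD * (1.0 - COOLING_REDUCTION)

air_it = it_power_mw(FACILITY_MW, AIR_COOLING_OVERHEAD, OTHER_OVERHEAD)
liquid_it = it_power_mw(FACILITY_MW, liquid_cooling_overhead, OTHER_OVERHEAD)

print(f"Air-cooled:    PUE {FACILITY_MW / air_it:.2f}, IT power {air_it:.1f} MW")
print(f"Liquid-cooled: PUE {FACILITY_MW / liquid_it:.2f}, IT power {liquid_it:.1f} MW")
print(f"Extra power available for silicon: {liquid_it - air_it:.1f} MW")
```

Under these assumptions the same grid connection supports about 8 MW more compute, which is why cooling efficiency is treated as a capacity question rather than only a cost question.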

Liquid cooling is not just an efficiency play; it is a necessity for the survival of the hardware. High-density chips operating at peak capacity generate heat so localized that air simply cannot move fast enough to carry it away. Advanced immersion cooling, where entire server blades are submerged in a dielectric fluid, represents the current frontier of this trend. While the initial capital expenditure is significantly higher than traditional setups, the long-term operational savings and the ability to pack more compute into a smaller footprint make it the only viable path for future AI scaling.
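A rough heat-balance estimate shows why air struggles at these densities. The sketch below applies the standard relation Q = P / (ρ · c_p · ΔT) to the 100 kW rack cited earlier. The 15 K coolant temperature rise and the approximate room-temperature fluid properties are assumptions chosen for illustration, not design values.

```python
def volumetric_flow_m3_per_s(heat_w: float, density_kg_m3: float,
                             specific_heat_j_kg_k: float, delta_t_k: float) -> float:
    """Coolant flow needed to carry away heat_w: Q = P / (rho * c_p * dT)."""
    return heat_w / (density_kg_m3 * specific_heat_j_kg_k * delta_t_k)


RACK_HEAT_W = 100_000.0  # 100 kW rack, as cited in the article
DELTA_T_K = 15.0         # assumed coolant temperature rise

# Approximate properties near room temperature: (density, specific heat).
AIR = (1.2, 1005.0)
WATER = (997.0, 4186.0)

air_flow = volumetric_flow_m3_per_s(RACK_HEAT_W, *AIR, DELTA_T_K)
water_flow = volumetric_flow_m3_per_s(RACK_HEAT_W, *WATER, DELTA_T_K)

print(f"Air:   {air_flow:.2f} m^3/s")
print(f"Water: {water_flow * 1000:.2f} L/s")
print(f"Air needs about {air_flow / water_flow:.0f}x the volume flow of water")
```

With these inputs a single rack would need several cubic meters of air per second, against under two liters of water per second. The exact numbers shift with the assumed temperature rise, but the ratio between the two fluids does not.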

Emerging Trends in Power Consumption and Grid Integration

The most pressing trend in this sector is the jump in facility power requirements, from a once-standard capacity of around 50 MW to installations of 300 MW. This surge has created a precarious relationship with the public power grid. Utilities are struggling to keep up with the pace of demand, leading to “grid bottlenecks” where a data center might be physically completed but forced to wait years for a high-voltage interconnection. This delay has shifted the market, making power availability, rather than location or tax incentives, the primary factor in site selection.
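To put those capacities in energy terms, the short calculation below converts megawatts of capacity into annual consumption. The 80% average load factor is an assumption chosen for illustration; actual utilization varies by facility.

```python
HOURS_PER_YEAR = 8760


def annual_energy_gwh(capacity_mw: float, load_factor: float) -> float:
    """Annual energy in GWh for a facility running at an average load factor."""
    return capacity_mw * load_factor * HOURS_PER_YEAR / 1000.0


LOAD_FACTOR = 0.8  # assumed average utilization, not a measured value

for capacity_mw in (50, 300):
    energy = annual_energy_gwh(capacity_mw, LOAD_FACTOR)
    print(f"{capacity_mw} MW facility: {energy:.1f} GWh per year")
```

Because AI workloads run at sustained load, the load factor stays high, so annual energy scales almost directly with the interconnection size a utility is asked to provide.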

In response, industry players are increasingly looking toward firm, carbon-free energy sources to reduce their exposure to an overstretched grid. We are seeing a move toward direct energy sourcing, where tech giants invest in small modular nuclear reactors or dedicated geothermal plants. This shift positions tech companies as pseudo-utilities, taking direct control over their energy supply chains so that their always-on infrastructure does not experience a brownout due to local grid instability.

Real-World Applications and Sector Deployment

In the finance and healthcare sectors, these high-density clusters are being deployed for workloads ranging from real-time fraud detection to protein-folding simulation. In finance, inference speed can determine whether a fraudulent transaction is blocked in time or a trade executes at the intended price. Healthcare providers are using this infrastructure to analyze genomic data at scales that were previously cost-prohibitive. These applications suggest that the massive energy spend yields tangible, high-value output, which high-stakes industries use to justify the environmental and financial costs.

Furthermore, we are witnessing a geographical relocation of infrastructure to regions like the Nordics or the Pacific Northwest, where hydroelectric and geothermal power are abundant. This is not just about being “green”; it is a strategic maneuver to secure the cheapest and most reliable baseload power available. Simultaneously, decentralized edge computing is emerging as a counter-trend, where smaller AI nodes are placed closer to the end-user in urban centers to reduce latency for applications like autonomous driving and augmented reality.

Technical Constraints and Sustainability Challenges

The “sustainability paradox” remains the most significant hurdle for the industry. While AI is being touted as a tool to solve climate change through better resource management, the infrastructure required to run it is currently driving a massive increase in carbon emissions. Corporate net-zero targets are increasingly at odds with the physical reality of the grid, which still relies on natural gas and coal to provide stable power when renewables underperform. This tension creates a reputational and regulatory risk that companies are desperate to mitigate.

To combat this, the industry is experimenting with Direct Air Capture (DAC) and high-quality carbon removal credits. However, these are often viewed as “band-aid” solutions. The real challenge lies in the unavoidable reliance on fossil fuels during periods of peak demand. Some operators are exploring long-duration battery storage or green hydrogen, but these technologies are not yet mature enough to support a 300 MW facility for extended periods. The trade-off between rapid AI advancement and environmental responsibility is a tightrope walk that has yet to be fully mastered.

Future Outlook and the Low-Carbon Transition

The future of AI infrastructure lies in its potential to act as a catalyst for a modernized, clean energy grid. Rather than being mere consumers, data centers are beginning to function as flexible loads that can help stabilize the grid by reducing their consumption during peak times. Breakthroughs in long-duration energy storage may allow these facilities to store excess solar and wind power and release it back to the grid when needed. This transition would turn a perceived environmental villain into a cornerstone of a more resilient and modern power architecture.
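The flexible-load idea can be sketched as a simple scheduling rule. Everything in the example is hypothetical: the hourly grid stress signal, the 0.8 threshold, and the split of a 300 MW facility into 200 MW of firm load (for example, latency-sensitive inference) and 100 MW of deferrable load (for example, batch training) are assumptions for illustration, not a description of any operator's actual scheme.

```python
from dataclasses import dataclass


@dataclass
class HourPlan:
    hour: int
    load_mw: float
    curtailed_mw: float


def plan_flexible_load(grid_stress: list[float], firm_mw: float,
                       deferrable_mw: float, stress_threshold: float) -> list[HourPlan]:
    """Run deferrable load only in hours when grid stress is below the threshold."""
    plan = []
    for hour, stress in enumerate(grid_stress):
        if stress >= stress_threshold:
            # Grid is stressed: keep only the firm load and defer the rest.
            plan.append(HourPlan(hour, firm_mw, deferrable_mw))
        else:
            plan.append(HourPlan(hour, firm_mw + deferrable_mw, 0.0))
    return plan


# Hypothetical normalized stress signal (0 = relaxed, 1 = at capacity).
grid_stress = [0.3, 0.4, 0.9, 0.95, 0.5, 0.2]
plan = plan_flexible_load(grid_stress, firm_mw=200.0, deferrable_mw=100.0,
                          stress_threshold=0.8)

for p in plan:
    print(f"hour {p.hour}: load {p.load_mw:.0f} MW, curtailed {p.curtailed_mw:.0f} MW")
```

A real scheme would also have to reschedule the deferred work and respect job deadlines; this sketch shows only the curtailment decision.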

We are also moving toward a more circular energy economy. Some facilities are already being designed to capture the waste heat from liquid cooling systems and pump it into local district heating networks, warming homes and offices. This level of integration suggests that the AI data center of the future will not be an isolated fortress, but a deeply integrated part of the urban and energy landscape, contributing as much to the physical well-being of a region as it does to its digital capabilities.

Summary of Findings and Industry Impact

The review of current AI data center infrastructure revealed a tenfold increase in energy density and a critical shift toward direct energy investment by technology firms. The industry moved away from traditional cooling methods in favor of high-performance liquid systems, a transition that was essential for maintaining hardware integrity under extreme loads. While the grid bottleneck posed a temporary threat to expansion, it also forced a more creative and aggressive approach to energy independence and sustainability.

Ultimately, the technology functioned as a double-edged sword in the global energy transition. It successfully pushed the boundaries of what was computationally possible, enabling breakthroughs in science and finance, yet it did so at a significant environmental cost. The sector demonstrated a remarkable ability to innovate its way out of thermal constraints, but the challenge of absolute carbon neutrality remained an ongoing struggle. As the infrastructure became more integrated with renewable energy sources, the long-term impact pointed toward a more robust and decentralized global power network.