Christopher Hailstone is a seasoned veteran of energy management and electricity delivery. Having spent decades navigating the complexities of grid reliability, he has become a leading voice on how utility infrastructure must evolve to meet the sudden, massive demands of the digital age. As our resident utilities expert, he offers a unique perspective on the intersection of national security, renewable integration, and the unprecedented surge in power consumption driven by artificial intelligence. In this conversation, we explore the immense pressure facing the Midcontinent Independent System Operator (MISO) as it prepares for a future where data centers become the dominant force on the electrical landscape.

Peak load is expected to jump from 121 GW to 163 GW by 2035. What specific infrastructure upgrades are essential to handle a 35% increase, and how do grid operators prioritize these projects when data center construction timelines are so aggressive? Please provide a step-by-step breakdown of the planning process.

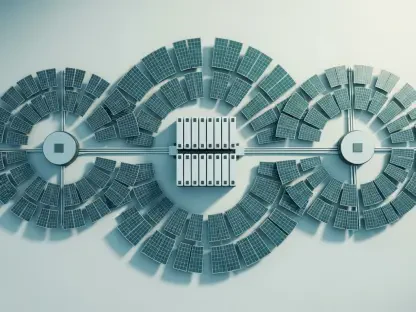

To accommodate a staggering 35% increase in peak load, from 121 GW to 163 GW, we have to look far beyond simple wire replacements and focus on massive transmission build-outs and high-voltage substations. This involves reinforcing the 345-kV and 765-kV backbones so power can flow from generation-rich areas to the high-density hubs where these servers hum day and night. The prioritization process starts with a “high confidence” screening, where we look for projects that already have firm interconnection agreements and have cleared the regulatory hurdles. We then move into a multi-phase planning cycle: first, we run steady-state and stability analyses to see where the grid would buckle under the 163 GW load; second, we identify the specific transmission “bottlenecks” that prevent energy flow; and finally, we fast-track the engineering for those critical nodes. It is a race against time: a data center can be built in eighteen months, while a major transmission line often takes seven to ten years to permit and construct, and that gap creates palpable tension in every planning meeting I attend.
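The screening and bottleneck steps described above can be sketched in a few lines of Python. This is a simplified illustration, not a utility planning tool: the `LoadRequest` fields, node names, and capacities are hypothetical, and a per-node capacity comparison stands in for the full steady-state and stability studies a planner would actually run. It also checks the headline arithmetic: going from 121 GW to 163 GW is a 34.7% increase, which rounds to the 35% cited.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class LoadRequest:
    name: str
    node: str  # hypothetical substation/bus identifier
    mw: float
    has_interconnection_agreement: bool
    cleared_regulatory_review: bool


def percent_increase(base_gw: float, forecast_gw: float) -> float:
    """Percentage growth from a base peak to a forecast peak."""
    return (forecast_gw - base_gw) / base_gw * 100.0


def high_confidence(requests: list[LoadRequest]) -> list[LoadRequest]:
    """Screening step: keep requests with a firm agreement AND regulatory clearance."""
    return [r for r in requests
            if r.has_interconnection_agreement and r.cleared_regulatory_review]


def find_bottlenecks(requests: list[LoadRequest],
                     node_capacity_mw: dict[str, float]) -> list[tuple[str, float]]:
    """Crude stand-in for steady-state/stability studies: add up screened load
    at each node and report every node whose load exceeds its capacity,
    largest shortfall first (a hypothetical fast-track order)."""
    load_mw: dict[str, float] = defaultdict(float)
    for r in high_confidence(requests):
        load_mw[r.node] += r.mw
    overloads = []
    for node, mw in load_mw.items():
        capacity = node_capacity_mw.get(node, 0.0)  # unknown node: assume no headroom
        if mw > capacity:
            overloads.append((node, mw - capacity))
    return sorted(overloads, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    print(f"Peak growth: {percent_increase(121, 163):.1f}%")  # Peak growth: 34.7%
    requests = [
        LoadRequest("DC-A", "NODE-1", 500, True, True),
        LoadRequest("DC-B", "NODE-1", 300, True, True),
        LoadRequest("DC-C", "NODE-2", 400, True, False),  # not cleared: screened out
        LoadRequest("DC-D", "NODE-3", 250, True, True),
    ]
    capacity = {"NODE-1": 600, "NODE-2": 1000, "NODE-3": 200}
    print(find_bottlenecks(requests, capacity))  # [('NODE-1', 200.0), ('NODE-3', 50.0)]
```

Ranking by shortfall is only one plausible way to order the fast-track list; a real prioritization would likely also weigh cost, equipment lead times, and reliability criteria.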

Future power demand is heavily tied to the profitability of the emerging AI industry and the transparency of project pipelines. How should utilities manage the financial risk of stranded assets if these projects fail, and what specific metrics currently define a “high confidence” project versus a speculative one?

Managing the financial risk of stranded assets is currently the top priority for utility executives, who are haunted by the memory of previous industrial booms that went bust and left ratepayers to foot the bill. To mitigate this, we are seeing a shift toward much more aggressive contractual commitments, such as requiring hyperscalers to provide larger upfront deposits and sign “take-or-pay” energy contracts that guarantee revenue even if the facility’s load drops. We define a “high confidence” project through three specific metrics: an executed interconnection agreement, formal awareness and acknowledgment from state regulators, and construction already underway. Speculative projects, by contrast, are often just “nameplate” requests sitting in a queue without secured land or equipment orders, and we have to be careful not to over-build infrastructure for a ghost project. Seeing steel in the ground and cranes in the air is often the most reliable data point we have in an era of opaque project pipelines.
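The three “high confidence” metrics lend themselves to a simple classifier. The sketch below is hypothetical: the `QueueEntry` fields mirror the metrics named above, the `NEEDS_REVIEW` middle tier is an assumption added for requests that are neither fully committed nor bare nameplate entries, and only high-confidence megawatts count toward the load that infrastructure is sized for.

```python
from dataclasses import dataclass
from enum import Enum


class Confidence(Enum):
    HIGH = "high confidence"
    NEEDS_REVIEW = "needs review"  # hypothetical middle tier
    SPECULATIVE = "speculative"


@dataclass
class QueueEntry:
    name: str
    requested_mw: float
    executed_interconnection_agreement: bool
    state_regulator_acknowledged: bool
    construction_underway: bool
    land_secured: bool = False
    equipment_ordered: bool = False


def classify(entry: QueueEntry) -> Confidence:
    """High confidence requires all three metrics; a bare 'nameplate' request
    with none of them and no land or equipment is speculative."""
    metrics = (
        entry.executed_interconnection_agreement,
        entry.state_regulator_acknowledged,
        entry.construction_underway,
    )
    if all(metrics):
        return Confidence.HIGH
    if not any(metrics) and not entry.land_secured and not entry.equipment_ordered:
        return Confidence.SPECULATIVE
    return Confidence.NEEDS_REVIEW


def committed_mw(entries: list[QueueEntry]) -> float:
    """Sum requested MW for high-confidence entries only, so planned
    infrastructure tracks committed load rather than ghost projects."""
    return sum(e.requested_mw for e in entries if classify(e) is Confidence.HIGH)


if __name__ == "__main__":
    queue = [
        QueueEntry("DC-A", 500, True, True, True),
        QueueEntry("DC-B", 300, False, False, False),                 # nameplate only
        QueueEntry("DC-C", 200, True, False, False, land_secured=True),
    ]
    for e in queue:
        print(e.name, classify(e).value)
    print("Committed MW:", committed_mw(queue))
```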

Forecasts show the Central region growing at 2.7% annually, significantly faster than the Southern region at 1.9%. Why is data center development concentrating in states like Illinois and Indiana, and what unique transmission challenges arise when load growth is so geographically uneven?

The concentration of development in states like Illinois and Indiana is driven by a perfect storm of favorable tax incentives, relatively low land costs, and a pre-existing network of fiber-optic corridors that act as the nervous system of the internet. These states also offer a cooler climate for part of the year, which reduces the energy needed to cool those dense server racks. But this regional lopsidedness creates a logistical nightmare for the grid. When the Central region grows at 2.7% a year while the South grows at 1.9%, the “gravity” of the grid shifts dramatically, and we have to push enormous amounts of power across state borders over transmission paths that weren’t designed for such heavy, one-way transfers. This geographical imbalance forces us to invest in sophisticated voltage control technologies and massive transformers to keep local distribution systems in the Midwest from being overwhelmed by the sheer thirst of these new industrial neighbors.
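Small differences in annual growth rates compound quickly. As a rough illustration, assuming the 2.7% and 1.9% rates hold constant (the five- and ten-year horizons below are chosen for illustration, not taken from the forecast), Central load would grow about 30% over a decade versus about 21% for the South.

```python
def cumulative_growth_pct(annual_rate: float, years: int) -> float:
    """Total percent growth after compounding `annual_rate` for `years` years."""
    return ((1.0 + annual_rate) ** years - 1.0) * 100.0


def regional_growth(rates: dict[str, float], years: int) -> dict[str, float]:
    """Cumulative percent growth for each region over the same horizon."""
    return {region: cumulative_growth_pct(rate, years) for region, rate in rates.items()}


if __name__ == "__main__":
    rates = {"Central": 0.027, "South": 0.019}  # annual rates quoted in the interview
    for years in (5, 10):  # illustrative horizons
        growth = regional_growth(rates, years)
        gap = growth["Central"] - growth["South"]
        print(years, {r: round(p, 1) for r, p in growth.items()}, f"gap={gap:.1f} pts")
```

The widening gap, rather than either rate alone, is what shifts flows toward the Central region over time.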

Moving toward dynamic planning models helps utilities distinguish between speculative and committed loads. What specific steps should regulators take to increase data transparency, and how can they adapt to rapid technological shifts without compromising the reliability of the grid for residential customers?

Regulators need to move away from the static, five-year planning cycles of the past and embrace a model where data transparency is mandated through a centralized clearinghouse for large-load requests. Currently, the “opaque” nature of these project pipelines means we are often flying blind, so regulators should require developers to share more granular operational and metered data as a condition of their grid access. To protect residential customers, we must implement “ring-fencing” mechanisms that ensure the costs of these massive upgrades are borne by the data center developers rather than being socialized across the monthly bills of a family in a three-bedroom home. We also need to build “right-sizing” clauses into our permits, allowing us to adjust the planned infrastructure if the expected AI-driven demand fails to materialize, ensuring the grid remains both robust and affordable.
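To make the ring-fencing idea concrete, the sketch below compares assigning an upgrade’s cost entirely to the developer with socializing part of it through residential rates. Every input (upgrade cost, residential share, household count, recovery period) is a hypothetical placeholder, and the straight-line recovery deliberately ignores financing costs and real rate-design details.

```python
from dataclasses import dataclass


@dataclass
class Allocation:
    developer_usd: float    # cost assigned directly to the large-load developer
    residential_usd: float  # cost recovered through residential rates


def allocate_upgrade(cost_usd: float, residential_fraction: float,
                     ring_fenced: bool) -> Allocation:
    """Ring-fenced: the developer pays the full upgrade cost.
    Socialized: `residential_fraction` of the cost flows into residential rates."""
    if not 0.0 <= residential_fraction <= 1.0:
        raise ValueError("residential_fraction must be between 0 and 1")
    if ring_fenced:
        return Allocation(developer_usd=cost_usd, residential_usd=0.0)
    residential = cost_usd * residential_fraction
    return Allocation(developer_usd=cost_usd - residential, residential_usd=residential)


def monthly_bill_impact(residential_usd: float, households: int,
                        recovery_years: int) -> float:
    """Straight-line recovery, no financing costs: dollars per household per month."""
    return residential_usd / households / (recovery_years * 12)


if __name__ == "__main__":
    # All inputs are hypothetical placeholders, not figures from the interview.
    for fenced in (True, False):
        alloc = allocate_upgrade(600e6, residential_fraction=0.4, ring_fenced=fenced)
        impact = monthly_bill_impact(alloc.residential_usd, households=2_000_000,
                                     recovery_years=20)
        print(f"ring_fenced={fenced}: ${impact:.2f} per household per month")
```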

Data centers are projected to consume a quarter of all electricity in some regions by 2040. How does this massive shift impact the long-term cost and availability of power for the manufacturing sector, and what trade-offs are necessary to balance these competing industrial interests?

When data centers are projected to consume 25% of all electricity in some regions by 2040, it creates fierce competition for every available megawatt, which naturally puts upward pressure on wholesale power prices. For the manufacturing sector, which often operates on razor-thin margins and requires steady, high-load power for assembly lines and smelting, this surge in demand can feel like a direct threat to its survival. The trade-offs are painful; we may have to prioritize “firm” power for manufacturers that provide thousands of local jobs while asking data centers to participate in aggressive demand-response programs where they power down during peak events. It is a delicate balancing act where we are essentially triaging the economy, trying to ensure that the digital future doesn’t come at the expense of the physical goods and industrial base that remain the backbone of our communities.
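The demand-response trade-off can be sketched as a simple curtailment rule: during a peak event, firm manufacturing loads are left untouched, and data centers shed their enrolled megawatts until demand fits available supply. The loads, capacities, and largest-commitment-first ordering are assumptions for illustration, not a description of any operator’s actual dispatch rules.

```python
from dataclasses import dataclass


@dataclass
class Load:
    name: str
    mw: float
    firm: bool                   # True: firm service (e.g. a manufacturer); never curtailed here
    curtailable_mw: float = 0.0  # MW enrolled in demand response


def curtail_for_peak(loads: list[Load], available_mw: float) -> tuple[dict[str, float], float]:
    """Shed enrolled demand-response MW, largest commitment first, until demand
    fits available supply. Returns (MW curtailed per load, unresolved shortfall)."""
    shortfall = max(0.0, sum(ld.mw for ld in loads) - available_mw)
    curtailed: dict[str, float] = {}
    flexible = sorted((ld for ld in loads if not ld.firm),
                      key=lambda ld: ld.curtailable_mw, reverse=True)
    for ld in flexible:
        if shortfall <= 0:
            break
        cut = min(ld.curtailable_mw, shortfall)
        if cut > 0:
            curtailed[ld.name] = cut
            shortfall -= cut
    return curtailed, shortfall


if __name__ == "__main__":
    loads = [
        Load("Smelter", 400.0, firm=True),
        Load("Assembly plant", 150.0, firm=True),
        Load("DC-1", 600.0, firm=False, curtailable_mw=200.0),
        Load("DC-2", 300.0, firm=False, curtailable_mw=100.0),
    ]
    print(curtail_for_peak(loads, available_mw=1100.0))
    # ({'DC-1': 200.0, 'DC-2': 100.0}, 50.0)
```

Any shortfall left after demand response (50 MW in this example) would have to be covered by other measures, since the sketch never touches firm load.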

With 8 GW to 14 GW of data centers expected to come online in the next two years, execution risk is at an all-time high. Can you share an anecdote or example of how supply chain constraints or tariff changes have recently forced a utility to pivot its load forecast?

The execution risk becomes clear when you realize that 8 GW to 14 GW is the equivalent of adding several massive nuclear plants’ worth of demand in just twenty-four months. I recently worked with a utility that had to scrap its three-year forecast entirely because a sudden change in international tariffs spiked the price of high-voltage transformers by nearly 40%, while delivery lead times stretched to four years. They had a signed agreement for a 500-MW data center but realized they could not get the hardware to connect it to the high-voltage grid within the developer’s timeline. This forced an emergency pivot in which the utility had to tell the developer to slow down, and it served as a wake-up call that our load forecasts are only as good as our ability to source the physical copper, steel, and silicon needed to build the connection.
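The utility’s dilemma comes down to calendar arithmetic. This sketch checks whether a transformer with the four-year lead time described above can be energized inside an assumed 24-month developer timeline, and applies the roughly 40% tariff-driven price increase to an order. The order date, six-month installation period, and $10 million base price are hypothetical.

```python
from datetime import date


def add_months(start: date, months: int) -> date:
    """Shift a date by whole months; the day is capped at 28 so every month is valid."""
    index = start.month - 1 + months
    return date(start.year + index // 12, index % 12 + 1, min(start.day, 28))


def earliest_energization(order_date: date, transformer_lead_months: int,
                          install_months: int) -> date:
    """Earliest in-service date: wait for delivery, then install and test."""
    return add_months(order_date, transformer_lead_months + install_months)


def meets_developer_timeline(order_date: date, transformer_lead_months: int,
                             install_months: int, required_by: date) -> bool:
    ready = earliest_energization(order_date, transformer_lead_months, install_months)
    return ready <= required_by


def escalated_cost(base_cost: float, tariff_increase: float) -> float:
    """Equipment cost after a proportional tariff-driven price increase."""
    return base_cost * (1.0 + tariff_increase)


if __name__ == "__main__":
    ordered = date(2025, 1, 1)      # hypothetical order date
    need = add_months(ordered, 24)  # assumed 24-month developer timeline
    ready = earliest_energization(ordered, transformer_lead_months=48, install_months=6)
    print(ready, need, meets_developer_timeline(ordered, 48, 6, need))
    # 2029-07-01 2027-01-01 False
    print(f"${escalated_cost(10e6, 0.40):,.0f}")  # hypothetical $10M order -> $14,000,000
```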

Do you have any advice for our readers?

The most important thing to understand is that the electrical grid is no longer a “background” service that we can take for granted; it is now the primary constraint on our technological and economic growth. My advice to readers, whether they are investors, policymakers, or concerned citizens, is to advocate for “agile” infrastructure—meaning we need to support regulatory reforms that allow utilities to build transmission faster and smarter. We are entering an era where energy literacy is essential, so stay informed about your local utility’s long-term integrated resource plans and pay attention to how they are balancing the “AI gold rush” with the need for stable, affordable residential service. The transition to a 163 GW peak load is possible, but it requires a level of public and private cooperation that we haven’t seen since the original electrification of the country a century ago.