Christopher Hailstone is a seasoned strategist in energy management and electricity delivery, renowned for his work on grid reliability and the integration of renewable resources. As a leading utilities expert, he has navigated the complexities of modernizing aging infrastructure while ensuring that new technologies can be deployed safely and efficiently. His deep understanding of regulatory frameworks and the technical nuances of the power grid provides a critical perspective on the evolving landscape of energy production and storage.

In this discussion, we explore the emerging role of surplus interconnection service (SIS) as a vital tool for expanding grid capacity. We touch upon the legislative shifts in states like Virginia and Indiana, the economic advantages of utilizing existing brownfield sites, and the technical hurdles of co-locating disparate energy technologies. The conversation also highlights the stark differences in how various regional grid operators handle hybrid resources and the potential for these systems to power high-demand users like data centers.

New laws require utilities to assess spare capacity at existing power plants to bring energy online faster. How does this shift the burden of proof for grid access, and what specific data points should developers prioritize when identifying potential brownfield sites for development?

The legislative shift we are seeing, particularly with the FAST Act in Virginia and SB 240 in Indiana, effectively moves the responsibility of discovery onto the utilities themselves. Instead of developers guessing where capacity might exist, utilities like Dominion Energy or Appalachian Power are now required to identify available interconnection rights at their existing and planned intermittent facilities. For a developer, this means the initial “burden of proof” is lightened because the utility must provide a transparent baseline of what the grid can actually handle at a specific node. When scouting these brownfield sites, developers should prioritize data points like the historical peak injection versus the rated interconnection limit, as well as the physical footprint available for new hardware. Sites with underutilized thermal assets or “infrequently used gas-fired peaker plants” are a goldmine because adding solar or storage there can turn a part-time facility into a daily contributor.
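As a rough illustration of that screening step, the sketch below compares historical peak injection with the rated interconnection limit and flags plants that rarely run. The site names, the numbers, the `Site` fields, and the thresholds in `is_candidate` are all hypothetical placeholders, not figures from any utility filing.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    interconnection_limit_mw: float  # rated injection limit at the point of interconnection
    historical_peak_mw: float        # highest injection observed over the study period
    capacity_factor: float           # share of the year the existing plant actually produces

    @property
    def headroom_mw(self) -> float:
        # Interconnection rights the existing plant never uses, even at its peak.
        return max(self.interconnection_limit_mw - self.historical_peak_mw, 0.0)


def is_candidate(site: Site, min_headroom_mw: float = 10.0, max_capacity_factor: float = 0.10) -> bool:
    # Hypothetical first-pass screen: either there is permanent headroom,
    # or the plant runs so rarely that its rights sit idle most hours.
    return site.headroom_mw >= min_headroom_mw or site.capacity_factor <= max_capacity_factor


sites = [
    Site("Peaker A", 200.0, 200.0, 0.05),    # hits its limit, but only a few hours a year
    Site("Wind B", 150.0, 120.0, 0.35),      # never uses its last 30 MW
    Site("Baseload C", 500.0, 498.0, 0.85),  # little surplus of either kind
]

candidates = [s.name for s in sites if is_candidate(s)]
print(candidates)
```

A real assessment would also need the physical footprint and hourly injection profiles; this only captures the two headline data points.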

Reviews for surplus interconnection can take under a year compared to the multi-year standard process, often at a fraction of the cost. What technical factors determine whether a project qualifies for this fast-track, and how do these savings influence a developer’s initial bidding strategy?

The primary technical qualifier for this fast-track is that the new resource must not exceed the existing facility’s total interconnection rights; essentially, you aren’t asking for a bigger pipe, just a better way to fill the one that is already there. Because these requests are studied outside the standard, often bogged-down interconnection queues, the timeline drops from several years to usually less than twelve months. The financial implications are staggering when you consider that a standard solar project in Kansas might face interconnection costs of $333.42 per kW, whereas a nearby SIS solar project can see those costs plummet to just $0.71 per kW. This massive reduction in capital expenditure allows developers to be much more aggressive in their bidding strategy for power purchase agreements. They can offer lower electricity rates while still maintaining healthy margins, essentially pricing out competitors who are stuck in the traditional, expensive queue.
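To put those per-kW figures in project terms, here is a small back-of-the-envelope calculation. The two cost rates are the Kansas figures quoted above; the 100 MW project size is a hypothetical chosen only for illustration.

```python
STANDARD_COST_PER_KW = 333.42  # standard-queue solar project, from the figures above
SIS_COST_PER_KW = 0.71         # nearby SIS solar project, from the figures above


def interconnection_cost(capacity_mw: float, cost_per_kw: float) -> float:
    # Convert MW to kW, then apply the per-kW interconnection cost.
    return capacity_mw * 1000 * cost_per_kw


capacity_mw = 100  # hypothetical project size

standard_cost = interconnection_cost(capacity_mw, STANDARD_COST_PER_KW)
sis_cost = interconnection_cost(capacity_mw, SIS_COST_PER_KW)
savings = standard_cost - sis_cost
cost_ratio = STANDARD_COST_PER_KW / SIS_COST_PER_KW

print(f"Standard queue: ${standard_cost:,.0f}")
print(f"Surplus service: ${sis_cost:,.0f}")
print(f"Difference: ${savings:,.0f}")
print(f"Standard is about {cost_ratio:.0f}x the SIS cost per kW")
```

At that assumed size, the gap is tens of millions of dollars of capital that never has to be recovered through the power purchase agreement price.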

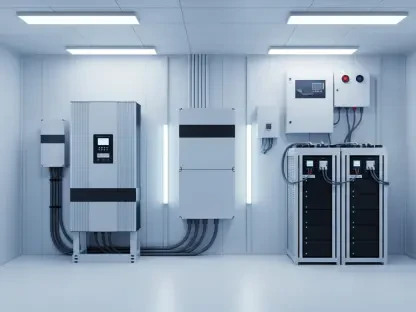

Adding battery storage to a wind farm or solar panels to a gas peaker site can maximize existing infrastructure. What are the primary engineering hurdles when integrating these different technologies, and how does this co-location impact the long-term capacity value of the original asset?

The engineering hurdles are primarily centered on the sophisticated control systems required to manage the synchronized output of two different generation types through a single point of interconnection. You have to ensure that the combined output never surges past the permitted limit, which requires real-time monitoring and automated curtailment software. However, the payoff is significant because adding storage to a wind or solar farm transforms it into a dispatchable resource, which inherently carries a much higher capacity value. For example, a gas peaker plant that only runs during extreme heat or cold can be paired with solar panels to provide steady, clean energy during the day, effectively “sweating” the asset and increasing its overall utility. This co-location doesn’t just maximize the infrastructure; it secures the asset’s relevance in a market that increasingly rewards reliability and flexibility over simple bulk generation.
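The core rule that the control system enforces can be sketched in a few lines. This is a simplified illustration, not a description of any vendor's plant controller: the function name `dispatch` is hypothetical, and it assumes the original facility has priority and the added resource is curtailed first.

```python
def dispatch(existing_mw: float, added_mw: float, poi_limit_mw: float):
    """Return (existing, added, curtailed) so combined output never exceeds the limit.

    Assumption: the original plant keeps priority at the point of
    interconnection; only the added resource is curtailed.
    """
    existing = min(max(existing_mw, 0.0), poi_limit_mw)
    room = poi_limit_mw - existing          # injection rights left for the new resource
    requested = max(added_mw, 0.0)
    added = min(requested, room)
    curtailed = requested - added           # output the added resource must hold back or store
    return existing, added, curtailed


# Peaker running hard on a hot afternoon: solar is cut back to fit the remaining room.
print(dispatch(existing_mw=80.0, added_mw=50.0, poi_limit_mw=100.0))

# Peaker offline: solar can use the full interconnection on its own.
print(dispatch(existing_mw=0.0, added_mw=50.0, poi_limit_mw=100.0))
```

In practice the curtailed energy is what co-located storage would absorb, which is part of why batteries pair so naturally with these sites.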

While some regions see thousands of megawatts in surplus requests, others struggle with low adoption due to complex participation rules. How do differences in how markets treat hybrid resources affect project financing, and what specific policy changes would encourage more developers to utilize existing rights?

The disparity between markets like MISO, which is currently studying roughly 8,960 MW of surplus projects, and PJM, which has seen only 14 requests since 2023, boils down to how hybrid resources are treated. In PJM, a developer is often forced to participate as a single hybrid resource, which can be a nightmare for financing because it requires renegotiating the off-take or debt terms of the original, existing project to merge it into a new entity. In contrast, MISO and SPP allow these projects to receive capacity credit while functioning as separate market entities, which is far more attractive to lenders who want clear, unencumbered collateral. To encourage more development, we need a policy shift that decouples the physical interconnection from the financial participation model. If PJM adopted a structure where the new storage or solar could be financed and managed independently while sharing the physical connection, we could unlock a massive portion of the 150 GW of potential supply that researchers have identified.

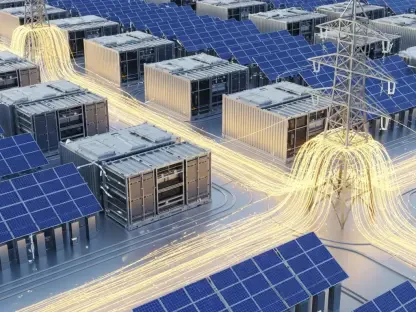

Proposals exist to link surplus interconnection capacity directly with high-demand users like data centers. What are the practical steps for a utility to facilitate this transition, and what role do pilot programs play in proving these configurations won’t compromise grid reliability?

The first practical step for a utility is to conduct a granular audit of its transmission capacity, much like what Maryland is proposing, and then share that data directly with large-scale consumers like data center developers. This creates a “plug-and-play” environment where a data center can be built adjacent to an existing power plant with known surplus capacity, bypassing the need for major new transmission lines. Pilot programs, such as those mandated by the Virginia FAST Act, are essential because they act as a controlled laboratory to test how high-demand loads interact with variable surplus resources like storage and solar. These pilots provide the real-world data needed to convince skeptical grid operators that the system can handle the sudden load swings of a data center without triggering local reliability issues or voltage instability. By proving the concept through small-scale, successful deployments, utilities can build the institutional confidence necessary to scale these configurations across the entire grid.

What is your forecast for surplus interconnection service?

I forecast that surplus interconnection service will become the dominant pathway for new capacity over the next five years, especially as we see it successfully deployed in PJM-footprint states. We are currently looking at a massive backlog of nearly 10,000 MW in SPP and over 8,000 MW in MISO, and as these projects come online and prove their reliability, other regions will have no choice but to follow suit. Within the next decade, I expect that every new power plant proposal will be required by law to include an SIS analysis, as we are seeing with Indiana’s 2030 mandate. This will lead to a “densification” of our energy grid, where existing sites become multi-technology hubs, significantly reducing both the environmental footprint and the time required to meet our skyrocketing electricity demand. Ultimately, the sheer economic pressure of interconnection costs of $0.71 per kW versus $333 per kW will force a regulatory evolution that makes SIS the standard rather than the exception.