The rapid proliferation of large language models has fundamentally transformed the electrical profile of modern computing facilities, turning once-predictable server farms into volatile energy consumers that test the limits of an aging national grid. Unlike the steady, predictable power draw of traditional cloud computing environments, artificial intelligence workloads are characterized by intense, rapid spikes in demand followed by precipitous drops. This erratic behavior creates technical difficulties that the current energy infrastructure was never designed to accommodate, and it is producing mounting friction between tech giants and utility providers. As 2026 progresses, the sheer scale of these deployments has moved the conversation from theoretical efficiency gains to an urgent requirement for physical resilience. Grid operators are finding that standard demand-forecasting methods are increasingly obsolete when confronted with the localized volatility of high-density AI clusters.

Hidden Failures and the Industry Silence

Engineers working on the front lines of data center maintenance are increasingly documenting a disturbing trend of premature equipment failure that remains largely shielded from public scrutiny. While companies are eager to showcase their latest computational breakthroughs, they are significantly less inclined to discuss the physical toll that erratic power swings take on internal electrical assets. High-frequency load fluctuations accelerate the thermal degradation of transformers, switchgear, and uninterruptible power supply systems, forcing replacements long before their intended service lives have concluded. This behind-the-meter instability is often managed through expensive, stop-gap troubleshooting that masks the underlying structural deficiency of the facility. By keeping these failures private, the industry risks delaying the necessary transition to more robust power management designs that can withstand the rigors of modern machine learning operations.

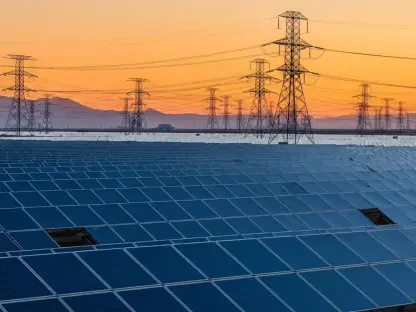

Beyond the walls of the data center, the stress of these erratic workloads is beginning to manifest as a genuine threat to regional grid stability and community energy security. Some utility companies have already taken the drastic step of disconnecting or delaying the energization of new data center phases after realizing that the resulting power spikes could destabilize local feeders. This volatility creates a ripple effect, where sudden drops in AI power demand can cause voltage surges that potentially damage other critical infrastructure connected to the same substation. The prevailing strategy of simply requesting more capacity from the utility has reached a breaking point, as the issue is no longer just the volume of electricity required but the quality and consistency of the delivery. Consequently, the relationship between data center developers and power providers has shifted toward a more cautious and at times adversarial dynamic.

Engineering a Stable Future: Energy Buffering

To bridge the widening gap between traditional power delivery and modern computational demands, the industry is looking toward an infrastructure architecture that prioritizes volatility buffering. It is becoming clear that simply oversizing existing systems or relying on legacy battery configurations is inadequate for the intense cycling required by generative AI. A new class of long-duration energy storage systems is emerging as a critical stability layer, designed to sit between the facility and the utility grid. These systems are engineered to absorb massive energy swings instantly, preventing them from bleeding back into the main grid or taxing internal hardware. Unlike standard lithium-ion setups, which might suffer from cell balancing issues under constant stress, these specialized buffers are designed to operate for hours and to handle deep, frequent discharges without performance loss. The result is a deliberate cushion between the facility's volatile load and the grid.
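To make the buffering idea concrete, the following is a minimal sketch rather than a description of any vendor's system. It assumes a simple control rule in which the grid connection may only change its draw by a fixed amount per time step, and the storage buffer supplies or absorbs whatever gap remains. The function name `smooth_with_buffer`, the parameter names, and all of the numbers are hypothetical and chosen only for illustration.

```python
def smooth_with_buffer(load_mw, max_ramp_mw, capacity_mwh, step_h=1.0 / 60.0):
    """Illustrative ramp-limited buffer (hypothetical model, not a real product).

    load_mw      -- facility demand per time step, in MW
    max_ramp_mw  -- largest change in grid draw allowed per step, in MW
    capacity_mwh -- usable energy capacity of the buffer, in MWh
    step_h       -- length of one time step, in hours (default: one minute)
    Returns (grid_draw_mw, state_of_charge_mwh) as two lists.
    """
    soc = capacity_mwh / 2.0       # start half full: room to charge or discharge
    grid = float(load_mw[0])       # assume the grid initially matches the load
    grid_draw, soc_trace = [], []

    for load in load_mw:
        # Move the grid draw toward the load, but no faster than the ramp limit.
        delta = max(-max_ramp_mw, min(max_ramp_mw, load - grid))
        grid += delta

        # The buffer covers the gap: positive means discharging into the facility.
        buffer_mw = load - grid
        new_soc = soc - buffer_mw * step_h

        # If the buffer would run empty or overfill, clamp it and let the
        # grid take the remainder (the ramp limit is exceeded in that step).
        clamped = max(0.0, min(capacity_mwh, new_soc))
        if clamped != new_soc:
            buffer_mw = (soc - clamped) / step_h
            grid = load - buffer_mw
        soc = clamped

        grid_draw.append(grid)
        soc_trace.append(soc)

    return grid_draw, soc_trace


# Hypothetical one-minute samples: a 20 MW baseline with a sudden 80 MW burst.
load = [20.0] * 5 + [80.0] * 5 + [20.0] * 5
grid_draw, soc = smooth_with_buffer(load, max_ramp_mw=10.0, capacity_mwh=10.0)

largest_load_step = max(abs(b - a) for a, b in zip(load, load[1:]))
largest_grid_step = max(abs(b - a) for a, b in zip(grid_draw, grid_draw[1:]))
print("largest step in facility load (MW):", largest_load_step)
print("largest step seen by the grid (MW):", largest_grid_step)
```

In this toy profile the facility load jumps by 60 MW in a single step, while the grid-side draw changes by no more than the assumed ramp limit as long as the buffer has energy and headroom to spare. A real system would also have to account for conversion losses, power ratings, and control latency, all of which this sketch ignores.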

The industry has come to recognize that the transition to artificial intelligence necessitates a fundamental rethink of how energy is managed at the source. This realization has prompted a shift away from reactive troubleshooting toward integrated, long-duration storage solutions that stabilize the power interface. Facilities that have adopted these buffering technologies have seen a marked decrease in equipment failure rates and have improved their standing with utility regulators by minimizing their impact on the surrounding community. Leaders in the sector are moving beyond the era of private maintenance crises and establishing new standards for infrastructure that can survive the high-frequency demands of AI workloads. By prioritizing physical resilience alongside computational power, developers can ensure that the expansion of digital capabilities does not come at the expense of grid reliability. This shift is essential for the sustainable growth of large-scale AI operations.